The previous post described a method for finding the average polygon with a given number of sides, assuming that a polygon is defined by its interior angles and edge lengths.

If we instead define shapes by their edge lengths alone, we can obtain average triangles and also average tetrahedra. Without loss of generality, we pick random edge lengths that sum to 1.
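The post does not say how the random lengths are distributed. A minimal sketch, assuming "randomly distributed" means uniform over all positive lengths that sum to 1 (a flat Dirichlet distribution): cutting the interval [0, 1] at uniformly random points and taking the gaps samples exactly this distribution. The helper name `random_edge_lengths` is hypothetical.

```python
import random


def random_edge_lengths(n, rng=random):
    """Draw n non-negative lengths summing to 1, uniformly over the simplex."""
    # n - 1 uniform cut points split [0, 1] into n pieces
    cuts = sorted(rng.random() for _ in range(n - 1))
    points = [0.0, *cuts, 1.0]
    # the gaps between consecutive cut points are the edge lengths
    return [hi - lo for lo, hi in zip(points, points[1:])]
```

For example, `random_edge_lengths(3, random.Random(0))` gives one candidate triangle and `random_edge_lengths(6, random.Random(0))` one candidate tetrahedron. This choice of distribution is consistent with the 2, 5 and 11 cuboid proportions quoted at the end of the post.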

For triangle edge lengths a, b, c, we get the following means (over 4 independent trials):

a,b,c: 0.4, 0.2, 0.4

An isosceles triangle.

When we also allow reflections, we get an obtuse triangle:

a,b,c: 0.444, 0.361, 0.194
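The triangle section does not spell out how samples are aligned before averaging. The sketch below assumes the same closest-match idea the post uses for tetrahedra: each triangle is compared with the running mean in every cyclic rotation of (a, b, c), optionally together with the mirrored orders, and the orientation with the largest dot product is kept. The function names, the uniform sampling, the starting reference and the number of passes are all hypothetical choices.

```python
import random


def random_edge_lengths(n, rng):
    # assumed distribution: uniform over the simplex (gaps between sorted uniform cuts)
    cuts = sorted(rng.random() for _ in range(n - 1))
    points = [0.0, *cuts, 1.0]
    return [hi - lo for lo, hi in zip(points, points[1:])]


def triangle_orientations(sides, reflections):
    # rotating a triangle cyclically shifts (a, b, c); reflecting it reverses the order
    rotations = [sides[i:] + sides[:i] for i in range(3)]
    if reflections:
        rotations += [r[::-1] for r in rotations]
    return rotations


def mean_triangle(count, reflections=False, passes=3, seed=0):
    rng = random.Random(seed)
    samples = []
    while len(samples) < count:
        sides = random_edge_lengths(3, rng)
        if max(sides) < 0.5:  # triangle inequality when the sides sum to 1
            samples.append(sides)
    mean = samples[0]  # hypothetical starting reference
    for _ in range(passes):
        # keep the orientation of each sample that best matches the current mean
        aligned = [
            max(triangle_orientations(s, reflections),
                key=lambda o: sum(x * y for x, y in zip(o, mean)))
            for s in samples
        ]
        mean = [sum(col) / count for col in zip(*aligned)]
    return mean
```

With reflections allowed, all six orderings are available, so by the rearrangement inequality the best match simply sorts each triangle the same way as the reference. The valid triangles (every side below 1/2) form a half-size simplex in the variables 1/2 − a, 1/2 − b, 1/2 − c, so the sorted means work out exactly to 8/18, 13/36 and 7/36, that is 0.444, 0.361 and 0.194, in agreement with the reflected result above.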

For tetrahedra, we pick 6 edge lengths and throw out any invalid tetrahedra: those with a face that disobeys the triangle inequality (the two shorter sides must sum to more than the longest side), or that disobey the tetrahedron inequality (the three smaller face areas must sum to more than the largest face area).
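A minimal sketch of this filter, using Heron's formula for the face areas. It assumes the edge labelling a = BC, b = CA, c = AB, d = BD, e = CD, f = AD, which is inferred from the coordinates given further down rather than stated in the post; the function names are hypothetical.

```python
import math


def heron_area(x, y, z):
    """Area of a triangle with sides x, y, z (Heron's formula)."""
    s = (x + y + z) / 2
    # clamp tiny negative values caused by rounding on near-degenerate faces
    return math.sqrt(max(s * (s - x) * (s - y) * (s - z), 0.0))


def is_valid_tetrahedron(a, b, c, d, e, f):
    """Triangle inequality on every face, then the face-area (tetrahedron) inequality.

    Assumed labelling: a = BC, b = CA, c = AB, d = BD, e = CD, f = AD.
    """
    faces = [(a, b, c), (c, d, f), (b, e, f), (a, d, e)]  # ABC, ABD, ACD, BCD
    for face in faces:
        x, y, z = sorted(face)
        if x + y <= z:
            return False
    areas = sorted(heron_area(*face) for face in faces)
    # the three smaller face areas must add up to more than the largest
    return areas[0] + areas[1] + areas[2] > areas[3]
```

These two tests are necessary but not sufficient for six lengths to form a real tetrahedron. For example, a closed loop of four unit edges whose two remaining edges are both 1.42 passes them, yet cannot be built: in any quadrilateral, even a skew one, the squared diagonals sum to at most the sum of the squared sides (here 4). The Cayley-Menger determinant gives an exact test.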

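For the remaining tetrahedra, we find the closest match over all relative orientations, scoring each match by the dot product of the two edge-length vectors. A tetrahedron has 12 such orientations (its rotations). We then convert the mean edge lengths into coordinates by placing one vertex at the origin, the second on the x-axis at (c, 0, 0), the third in the xy-plane at (r, s, 0), and the fourth at (t, u, v).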
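The post does not give the matching code or the coordinate formulas, so the following is a sketch under stated assumptions. The edge labelling (a = BC, b = CA, c = AB, d = BD, e = CD, f = AD) is inferred from the published numbers; the helper names and the "align every sample to the running mean, then re-average" loop are hypothetical. Rotations correspond to the 12 even relabellings of the four vertices, and allowing reflections adds the 12 odd ones.

```python
import math
from itertools import permutations

# Edge order (a, b, c, d, e, f) as vertex pairs, with vertices A, B, C, D = 0, 1, 2, 3.
# Assumed labelling: a = BC, b = CA, c = AB, d = BD, e = CD, f = AD.
EDGES = [(1, 2), (0, 2), (0, 1), (1, 3), (2, 3), (0, 3)]
EDGE_INDEX = {frozenset(pair): i for i, pair in enumerate(EDGES)}


def is_even(p):
    inversions = sum(p[i] > p[j] for i in range(len(p)) for j in range(i + 1, len(p)))
    return inversions % 2 == 0


def orientations(reflections=False):
    """Edge reorderings induced by relabelling the vertices.

    The 12 rotations are the even vertex permutations; reflections add the 12 odd ones.
    """
    perms = []
    for p in permutations(range(4)):
        if reflections or is_even(p):
            perms.append([EDGE_INDEX[frozenset((p[u], p[v]))] for u, v in EDGES])
    return perms


def best_match(edges, reference, perms):
    """Reordering of `edges` with the largest dot product against `reference`."""
    candidates = ([edges[k] for k in perm] for perm in perms)
    return max(candidates, key=lambda cand: sum(x * y for x, y in zip(cand, reference)))


def mean_shape(samples, perms, passes=5):
    """Average edge vectors after aligning each one to the running mean (hypothetical scheme)."""
    mean = list(samples[0])
    for _ in range(passes):
        aligned = [best_match(s, mean, perms) for s in samples]
        mean = [sum(col) / len(aligned) for col in zip(*aligned)]
    return mean


def to_coords(a, b, c, d, e, f):
    """A at the origin, B = (c, 0, 0), C = (r, s, 0), D = (t, u, v)."""
    r = (b * b + c * c - a * a) / (2 * c)              # from |AC| = b and |BC| = a
    s = math.sqrt(b * b - r * r)
    t = (f * f + c * c - d * d) / (2 * c)              # from |AD| = f and |BD| = d
    u = (b * b + f * f - e * e - 2 * r * t) / (2 * s)  # from |CD| = e
    v = math.sqrt(f * f - t * t - u * u)               # height of D above the plane ABC
    return [(0.0, 0.0, 0.0), (c, 0.0, 0.0), (r, s, 0.0), (t, u, v)]
```

With this labelling, `to_coords` applied to either set of mean edge lengths below reproduces the listed r, s, t, u, v values; passing `orientations(reflections=True)` to `mean_shape` gives the reflection-allowed variant.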

Under this scheme, the mean tetrahedron is fairly unstable, but it looks roughly like this:

a,b,c,d,e,f: 0.157258, 0.184638, 0.178683, 0.143683, 0.16418, 0.171558

r,s,t,u,v: 0.115537, 0.144023, 0.113931, 0.035557, 0.123238

coords: (0,0,0), (0.179,0,0), (0.116, 0.144,0), (0.114, 0.0356, 0.123)

If we allow reflections when matching shapes, the result looks more like this:

a,b,c,d,e,f: 0.195577, 0.184195, 0.126073, 0.159834, 0.193624, 0.140698

r,s,t,u,v: 0.0458932, 0.178386, 0.0402287, 0.0351516, 0.130161

coords: (0,0,0), (0.126,0,0), (0.0459, 0.178,0), (0.0402, 0.0352, 0.130)

Here it is viewed from the top down:

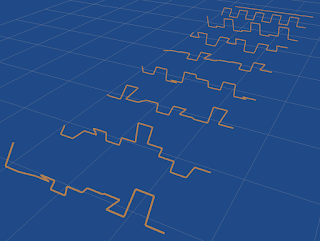

Cuboids

For ordinary right-angled cuboids, we know from the mean signals post that the mean edge lengths must be proportional to 2, 5 and 11.

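This is because a cuboid places no further constraints on its edge lengths, and its symmetries are the same as those of a length-3 sequence with reflection allowed: matching two cuboids can pair up their three edge lengths in any of the six possible orders.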
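A short exact check, assuming the three lengths are uniform over the simplex (as in the sampling sketch assumed above). With all six orderings available, the closest match sorts each cuboid's lengths the same way as the reference, so the mean lengths are the expected order statistics of three uniform spacings, E[k-th smallest] = (1/n)(1/n + 1/(n−1) + … + 1/(n−k+1)). The function name is hypothetical; it uses Python's standard `fractions` module to keep the arithmetic exact.

```python
from fractions import Fraction


def sorted_mean_lengths(n):
    """Exact expected lengths, largest first, of n pieces uniform on the simplex, once sorted.

    Uses E[k-th smallest] = (1/n) * (1/n + 1/(n-1) + ... + 1/(n-k+1)).
    """
    means = [
        Fraction(1, n) * sum(Fraction(1, n - j) for j in range(k))
        for k in range(1, n + 1)
    ]
    return means[::-1]
```

For n = 3 this gives 11/18, 5/18 and 2/18, so `[18 * m for m in sorted_mean_lengths(3)]` is 11, 5, 2: the 2, 5 and 11 proportions quoted above.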